Microservices Architecture

A microservices architecture is a software architecture approach in which a set of software applications, each designed for a limited scope and known as “microservices,” work together to form a bigger solution. Each microservice, as the name implies, has deliberately minimal capabilities, which keeps the overall architecture highly modular. A microservices architecture is analogous to a manufacturing assembly line, where each microservice is like a station on the line. Just as each station is responsible for one specific task, so is each microservice. Each station and each microservice is an “expert” in its own responsibility, which promotes efficiency, consistency, and quality in the workflow and the outputs. Contrast that with a manufacturing environment where each station is responsible for building the entire product. That setup is analogous to a monolithic software application that performs all tasks within the same process.

How Do Microservices Architectures Work?

A microservices architecture is a design pattern in which each microservice is just one small piece of a bigger overall system. Each microservice performs a specific, limited-scope task that contributes to the overall result. A task could be as simple as “calculate the standard deviation of the input data set” or “count the number of words in the text.” The key to building microservices is planning the system to identify the distinct subtasks and then writing an application that addresses each subtask. Because each microservice needs to deliver its output data to the next microservice, a microservices architecture often uses a lightweight messaging system for that data handoff.
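The handoff described above can be sketched in a few lines. This is a minimal illustration, not a production design: Python's in-process `queue.Queue` stands in for the lightweight messaging system, a thread stands in for a separately deployed service, and the names `run_stage`, `count_words`, and `run_pipeline` are hypothetical.

```python
import queue
import statistics
import threading

STOP = object()  # sentinel message that tells a stage to shut down


def run_stage(task, inbox, outbox):
    """Hypothetical stage loop: read a message, apply one task, pass the result on."""
    while True:
        message = inbox.get()
        if message is STOP:
            outbox.put(STOP)  # propagate shutdown to whoever reads the outbox
            break
        outbox.put(task(message))


def count_words(text):
    # First subtask: "count the number of words in the text"
    return len(text.split())


def run_pipeline(texts):
    """Count words in one stage, then compute the standard deviation of the counts."""
    raw, counts = queue.Queue(), queue.Queue()
    worker = threading.Thread(target=run_stage, args=(count_words, raw, counts))
    worker.start()

    for text in texts:
        raw.put(text)
    raw.put(STOP)

    collected = []
    while (item := counts.get()) is not STOP:
        collected.append(item)
    worker.join()

    # Second subtask: "calculate the standard deviation of the input data set"
    # (population standard deviation is an assumption made for this sketch)
    return collected, statistics.pstdev(collected)
```

In a real deployment each stage would typically run as its own process or container and the queues would be replaced by a message broker; the shape of the handoff stays the same.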

A microservices architecture has several advantages over a monolithic architecture. Microservices are easier to build (because of their smaller size), deploy, scale (because more instances of a microservice can be added to any step to run in parallel), and maintain. Microservices are not entirely new, as the concept has roots in older design patterns and principles such as service-oriented architecture (SOA), modularity, and separation of concerns. However, microservices represent a new way of architecting large-scale systems that would otherwise be more difficult to manage in a traditional monolithic architecture.

In general, there are no specific requirements around building a microservices architecture, which gives application developers a lot of flexibility regarding programming language, technology environment, and deployment options. Specific requirements often drive the choice of technology, and when high performance and low latency are required, an in-memory processing engine is typically used. Engines like Hazelcast Engine leverage the power of in-memory processing to build and run extremely fast microservices in environments that need to react quickly to huge volumes of data.

Difference Between Microservices and Monolithic Architectures

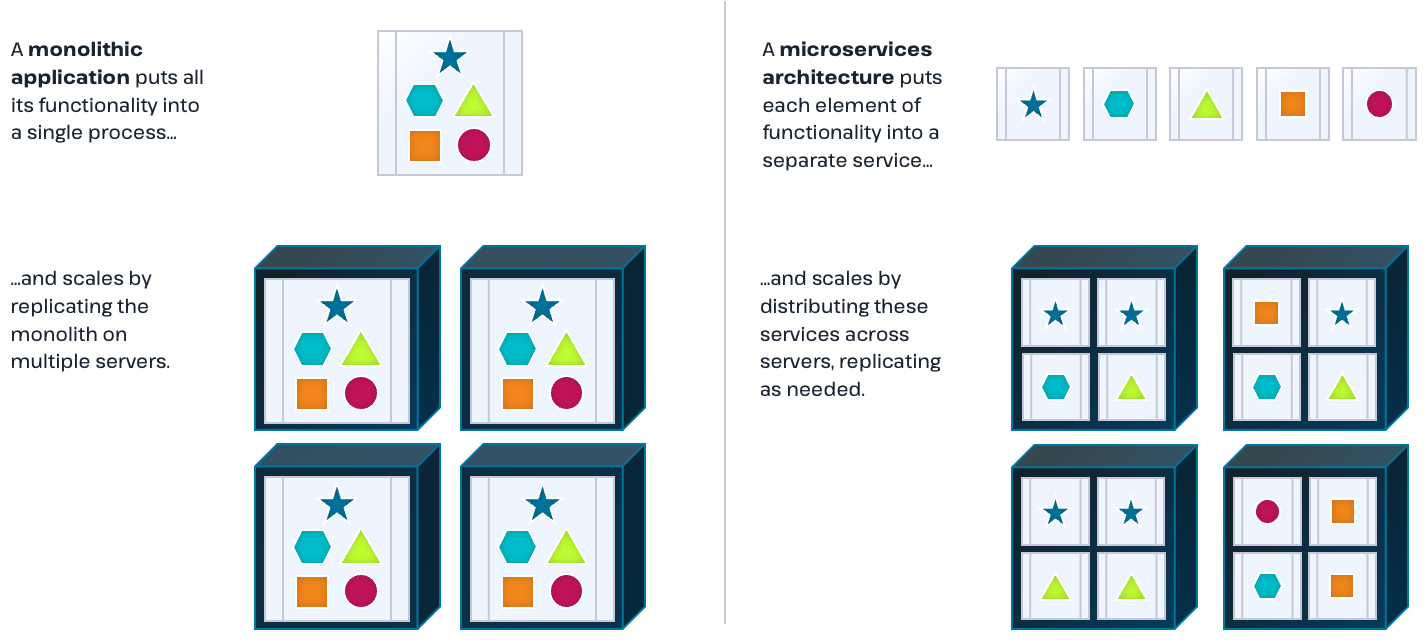

The key difference between a monolithic and a microservices architecture is that a monolithic program is a single, unified unit. In contrast, a microservices architecture comprises smaller, independently deployable services.

Each service in a microservices architecture design is in charge of a certain element or function. Since only the service that requires modification needs to be changed, updating or modifying individual services becomes simpler. Microservices are also easy to scale because each service can grow or shrink without affecting the other services.
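As a rough illustration of scaling one service without touching the others, the sketch below gives each service its own replica count. It assumes thread pools as a stand-in for separately deployed service instances; `REPLICAS`, `run_service`, `cleanse`, and `enrich` are hypothetical names, not part of any particular framework.

```python
from concurrent.futures import ThreadPoolExecutor


def cleanse(record):
    # A cheap step: trim whitespace and lowercase the value
    return record.strip().lower()


def enrich(record):
    # A step assumed to be slower, so it is given more replicas below
    return {"value": record, "length": len(record)}


# Hypothetical per-service replica counts; changing one entry
# does not affect how any other service runs.
REPLICAS = {"cleanse": 1, "enrich": 3}


def run_service(name, handler, records):
    """Process records with as many parallel workers as the service is assigned."""
    with ThreadPoolExecutor(max_workers=REPLICAS[name]) as pool:
        # Executor.map returns results in input order
        return list(pool.map(handler, records))
```

Raising `REPLICAS["enrich"]` changes only how the enrichment step runs; the cleansing step is unaffected, which is the property the paragraph above describes.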

A monolithic program tightly integrates all code, data, and settings. This close coupling makes it challenging to modify or update the application, because a change to one component can affect others and the whole application has to be rebuilt and redeployed. Additionally, scaling a monolithic application can be difficult, because the entire application must be replicated even when only one function needs more capacity.

Microservices Architecture Example Use Cases

A microservices architecture is especially beneficial for use cases that involve extensive data pipelines. For instance, in a reporting system for a company’s retail store sales, each step of the data preparation process (data collection, cleansing, normalization, enrichment, aggregation, and reporting) can be handled by a separate microservice. This approach naturally creates a trackable lineage for the data. If an issue arises, you can readily identify which microservice needs updating.
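The lineage idea can be sketched as follows. This is a simplified, single-process illustration under stated assumptions: each function stands in for a separate microservice, and the `Record` type, the `stage` decorator, the `REGIONS` table, and the field names are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical lookup table used by the enrichment step
REGIONS = {"Oslo": "north"}


@dataclass
class Record:
    payload: dict
    lineage: list = field(default_factory=list)  # names of the steps applied so far


def stage(name):
    """Wrap a step so that it records its own name in the record's lineage."""
    def decorator(fn):
        def wrapper(record):
            new_payload = fn(dict(record.payload))  # work on a copy of the payload
            return Record(new_payload, record.lineage + [name])
        return wrapper
    return decorator


@stage("cleansing")
def cleanse_sale(payload):
    payload["store"] = payload["store"].strip()
    return payload


@stage("normalization")
def normalize_sale(payload):
    payload["sales"] = float(payload["sales"])
    return payload


@stage("enrichment")
def enrich_sale(payload):
    payload["region"] = REGIONS.get(payload["store"], "unknown")
    return payload


PIPELINE = [cleanse_sale, normalize_sale, enrich_sale]


def process(record):
    """Run a record through every step in order."""
    for step in PIPELINE:
        record = step(record)
    return record
```

After processing, `record.lineage` lists the steps the data passed through, so a bad value can be traced back to the step that produced it.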

Another use case is machine learning (ML). A microservices-based ML environment collects, aggregates, and analyzes a data flow so that the ML framework can determine an outcome. In such an environment, the data runs through a workflow with many steps, and a microservice handles each step. One advantage of using a microservices architecture for machine learning is that it lets you incorporate multiple machine learning frameworks within the workflow, so you can create several models from the same data flow. This is useful when different machine learning frameworks target the same predicted outcome: by running multiple frameworks simultaneously, you can compare which model delivers the best results.
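The comparison step might look like the sketch below. It is only an illustration: two toy trainers written with the standard library stand in for separate ML frameworks, mean absolute error is an assumed scoring metric, and the names `train_mean_model`, `train_linear_model`, and `compare` are hypothetical.

```python
from statistics import fmean


def train_mean_model(xs, ys):
    """Toy 'framework' A: always predict the average of the training targets."""
    average = fmean(ys)
    return lambda x: average


def train_linear_model(xs, ys):
    """Toy 'framework' B: ordinary least-squares fit of a straight line."""
    mean_x, mean_y = fmean(xs), fmean(ys)
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def mean_abs_error(model, xs, ys):
    return fmean(abs(model(x) - y) for x, y in zip(xs, ys))


def compare(trainers, train_data, test_data):
    """Train every model on the same data and return the best name plus all scores."""
    scores = {
        name: mean_abs_error(trainer(*train_data), *test_data)
        for name, trainer in trainers.items()
    }
    best = min(scores, key=scores.get)  # lower error is better
    return best, scores
```

In a microservices setting each trainer would run as its own service consuming the same data flow, with a separate service collecting the scores.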