What Is a Cache Miss?

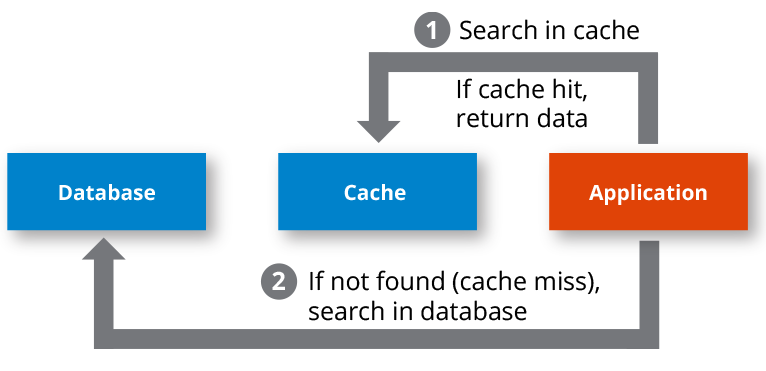

A cache miss is an event in which a system or application requests data from a cache, but that data is not currently in cache memory. Contrast this with a cache hit, in which the requested data is successfully retrieved from the cache. On a cache miss, the system or application must make a second attempt to locate the data, this time against the slower underlying data store, such as the main database. If the data is found there, it is typically copied into the cache in anticipation of another near-future request for the same data.

A cache miss occurs either because the data was never placed in the cache, or because the data was removed (“evicted”) from the cache, either by the caching system itself or by an external application that explicitly requested the eviction. The caching system evicts data when it needs to free space for new data, or when the data's time-to-live (TTL) has expired.
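
The three eviction causes above (capacity pressure, TTL expiry, and an explicit request) can be sketched in a few lines. This is a minimal illustration, not how any particular caching product works: the class name `TTLCache`, its parameters, and the choice to drop the oldest inserted entry when full are all assumptions made for the example.

```python
import time

class TTLCache:
    """Tiny illustrative cache with a fixed capacity and a per-entry time-to-live."""

    def __init__(self, capacity, ttl_seconds, clock=time.monotonic):
        self.capacity = capacity
        self.ttl = ttl_seconds
        self.clock = clock   # injectable so expiry can be exercised without sleeping
        self._data = {}      # key -> (value, expires_at); dicts keep insertion order

    def put(self, key, value):
        if key not in self._data and len(self._data) >= self.capacity:
            # Capacity eviction: drop the oldest inserted entry to free space.
            oldest = next(iter(self._data))
            del self._data[oldest]
        self._data[key] = (value, self.clock() + self.ttl)

    def get(self, key):
        """Return the cached value, or None on a cache miss."""
        entry = self._data.get(key)
        if entry is None:
            return None              # miss: never cached, or already evicted
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._data[key]      # TTL eviction
            return None              # miss: the entry expired
        return value

    def evict(self, key):
        """Explicit eviction requested by an application."""
        self._data.pop(key, None)
```

Because `get` returns `None` on a miss, this sketch cannot distinguish a miss from a cached `None` value; a real cache would typically use a sentinel or a separate lookup result type.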

A cache miss represents the time-based cost of having a cache. Each miss adds latency that a system without a cache would not incur, because the failed cache lookup happens before the request reaches the underlying data store. However, in a properly configured cache, the speed gained from cache hits more than makes up for the time lost on cache misses. This is why it is important to understand the access patterns of the system so that cache misses can be kept relatively low. If your system tends to access the same data multiple times within a short period, the overall advantage of a cache is realized.
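
The trade-off can be put into numbers with a short calculation. The sketch below assumes a simple model in which every request checks the cache first and a miss then pays the full store latency on top of the failed lookup; the function names and the latency figures in the usage note are hypothetical.

```python
def average_access_time(hit_ratio, cache_latency_ms, store_latency_ms):
    """Expected latency per request when the cache is always checked first.

    A hit costs only the cache lookup; a miss costs the failed cache lookup
    plus the trip to the underlying store.
    """
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    miss_ratio = 1.0 - hit_ratio
    return (hit_ratio * cache_latency_ms
            + miss_ratio * (cache_latency_ms + store_latency_ms))


def break_even_hit_ratio(cache_latency_ms, store_latency_ms):
    """Hit ratio above which the cache beats going straight to the store."""
    return cache_latency_ms / store_latency_ms
```

With an assumed 1 ms cache lookup and a 20 ms store lookup, a 90% hit ratio gives an average of about 3 ms per request, while a 0% hit ratio gives 21 ms, which is worse than the 20 ms of having no cache at all. Under this model the cache pays off once the hit ratio exceeds 5%.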


A cache miss occurs when an application is unable to locate requested data in the cache.

What Happens on a Cache Miss?

When a cache miss occurs, the system or application proceeds to locate the data in the underlying data store, which increases the duration of the request. Typically, the system then writes the data to the cache, which adds further latency, though that cost is offset by subsequent cache hits.
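
The read path described above can be sketched as follows. This is an illustrative example only: `CacheAsideReader` is a hypothetical name, and a plain dictionary stands in for the slower underlying data store.

```python
class CacheAsideReader:
    """Checks the cache first and falls back to the underlying store on a miss."""

    def __init__(self, store):
        self.store = store   # stand-in for the slower underlying data store
        self.cache = {}
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.cache:
            self.hits += 1
            return self.cache[key]       # cache hit: fast path
        self.misses += 1                 # cache miss: go to the store
        if key not in self.store:
            return None                  # not in the store either; nothing to cache
        value = self.store[key]
        self.cache[key] = value          # populate the cache for later requests
        return value
```

For example, reading the same key twice records one miss followed by one hit:

```python
reader = CacheAsideReader({"user:1": "Ada"})
reader.get("user:1")   # miss: fetched from the store, then cached
reader.get("user:1")   # hit: served from the cache
```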

How Can You Reduce the Latency Caused by Cache Misses?

To reduce cache misses and the associated latency, you need strategies that increase the likelihood that requested data is in the cache. One trivial way to reduce cache misses is to create a cache that is large enough to hold all data. That way, all data in the underlying store can be placed in the cache, so there is never a cache miss and all data accesses are extremely fast. This is often impractical for large data sets, because the cost of sufficient random-access memory (RAM) may not justify the advantage of having zero cache misses.

There are other ways to reduce cache misses without having a large cache. For example, you can apply an appropriate cache replacement policy to help the cache decide which data to remove when it needs space for new data. These policies aim to remove the data that is least likely to be accessed again in the near future. Some example policies include:

- First in first out (FIFO). This policy evicts the earliest added entries in the cache, regardless of how many times they were accessed along the way. It is especially useful when access patterns progress through the entire data set, so that at some point the earlier data in the cache will no longer be accessed, while newly added data is more likely to be accessed multiple times.

- Last in first out (LIFO). This policy evicts the most recently added entries in the cache. This is ideal when systems tend to access the same subset of data very frequently. That subset tends to stay in the cache, continuously giving the system fast access to it.

- Least recently used (LRU). This policy evicts the entries that have gone the longest without being accessed. It works well in environments where data that has not been accessed in a while is unlikely to be accessed again and, conversely, recently accessed data is likely to be accessed again.

- Most recently used (MRU). This policy evicts the most recently accessed entries in the cache. It works well in environments where the longer data has been in the cache, the more likely it is to be accessed again.
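
To make the differences between these four policies concrete, the sketch below replays a sequence of key accesses against a small cache and counts the misses under each policy. It is a simplified simulation written for this comparison, not the implementation used by any particular caching system; the function name `count_misses` and the sample access sequence are hypothetical.

```python
from collections import OrderedDict

def count_misses(accesses, capacity, policy):
    """Replay `accesses` against a cache of `capacity` entries and count misses.

    policy is one of "FIFO", "LIFO", "LRU" or "MRU".
    """
    if policy not in {"FIFO", "LIFO", "LRU", "MRU"}:
        raise ValueError(f"unknown policy: {policy}")
    if capacity < 1:
        raise ValueError("capacity must be at least 1")
    cache = OrderedDict()    # keys ordered from oldest to newest
    misses = 0
    for key in accesses:
        if key in cache:
            if policy in ("LRU", "MRU"):
                # Recency-based policies reorder on every access;
                # FIFO and LIFO keep pure insertion order.
                cache.move_to_end(key)
            continue
        misses += 1
        if len(cache) >= capacity:
            # FIFO and LRU evict from the old end; LIFO and MRU from the new end.
            cache.popitem(last=policy in ("LIFO", "MRU"))
        cache[key] = True
    return misses


# A cyclic scan over three keys with room for only two of them.
scan = [1, 2, 3, 1, 2, 3, 1, 2, 3]
for policy in ("FIFO", "LIFO", "LRU", "MRU"):
    print(policy, count_misses(scan, capacity=2, policy=policy))
```

On this cyclic scan, the sketch reports 9 misses for FIFO and LRU, 7 for LIFO, and 6 for MRU. The ranking would change with a different access pattern, which is why the policy should be matched to how your system actually accesses its data.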

With a policy that suits your environment, you can store only a subset of your data set in the cache and still gain significant performance despite the cache misses. These policies work better as your cache size increases, which is why technologies like distributed caches are extremely important today. The ability to grow a cache by adding more nodes to a caching cluster represents an economical way to accommodate expanding loads. In-memory technologies like Hazelcast are used to build large distributed caches that accelerate applications that leverage massive data sets.