Ellie Mae Chooses Hazelcast for its Stability, Performance, Durability, and Scalability

Industry

Business automation software

Year Founded

1997

Product

Hazelcast Platform

Since its founding in 1997, Ellie Mae has become the leading provider of end-to-end business automation software for the U.S. mortgage industry, facilitating the process of originating and funding mortgage loans so lenders can achieve compliance, quality, and efficiency. Ellie Mae serves banks, credit unions, and mortgage companies of all sizes, providing an all-in-one, fully integrated solution that covers the entire loan lifecycle. Ellie Mae provides one system of record so loan providers can close high-quality, compliant loans more efficiently, no matter what the industry or regulators do next.

Encompass® is an end-to-end solution delivered using a software-as-a-service (SaaS) model that serves as the core operating system for mortgage originators. Encompass spans customer relationship management, loan origination, and business management.

Ellie Mae also provides an integrated network that allows mortgage professionals to conduct electronic business transactions with mortgage lenders and settlement service providers who process and fund loans. According to estimates, more than 20% of all mortgage originations in the U.S. flow through the Ellie Mae network.

The Challenge

Before Ellie Mae adopted Hazelcast, its Encompass application was suffering from two major problems: performance and scalability.

Encompass could not meet its agreed service level agreements (SLAs). Performance was compromised by the disk-based access methodology of its database vendor, resulting in high latencies and low throughput. To overcome these performance issues, Ellie Mae made several attempts at caching frequently used data in the Encompass application’s memory with a homegrown caching solution. These attempts did not succeed: because each application node held its own copy of the data, the copies became inconsistent across nodes, which prevented horizontal scaling. Ultimately the homegrown solution was too burdensome to maintain, as it could not scale because of synchronization and consistency issues across all nodes. It also did not guarantee high availability, resulting in failed SLAs.

Scalability was the other major challenge, as Ellie Mae was expecting its business to grow by 25% to 30% every year, and its current application memory setup was not a scalable model. This would potentially have resulted in significant losses.

Solution Requirements

Ellie Mae was looking for a distributed solution that was not only scalable on demand to cater to its constantly growing business but was also a high-performance system with cross-platform support for both .NET and Java. Ellie Mae evaluated the major solution providers in the market, including Hazelcast, with stringent requirements around data lifetime validity and eviction, notifications, and flexible cluster-wide data consistency in mind.
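To make the "data lifetime validity and eviction" requirement concrete, here is a minimal single-process sketch of a time-to-live (TTL) cache. It is an illustration only and not the Hazelcast API: the class name `TtlCache`, the `ttl_seconds` parameter, and the injectable `clock` are all hypothetical names chosen for this example.

```python
import time


class TtlCache:
    """Tiny in-process cache whose entries expire after a fixed time-to-live.

    Hypothetical illustration; a distributed cache applies the same idea
    per entry across many nodes.
    """

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock      # injectable so expiry can be tested without sleeping
        self._entries = {}       # key -> (value, expiry timestamp)

    def put(self, key, value):
        # Every write restarts the entry's validity window.
        self._entries[key] = (value, self._clock() + self._ttl)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            # The entry outlived its validity window: evict it and report a miss.
            del self._entries[key]
            return None
        return value

    def __len__(self):
        return len(self._entries)
```

A caller that receives `None` would fall back to the database and then `put` the fresh value, so stale data is never served past its lifetime.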

From a performance point of view, Ellie Mae was looking for a system that was capable of running in a multitenant infrastructure where potentially hundreds of client nodes could connect to a distributed server cache and handle more than 28,000 concurrent transactions. This further meant storing more than 250,000 elements, which could amount to hundreds of gigabytes of data in memory. In-memory storage would ensure the lowest possible latencies, and keeping backup copies of the original data in cache memory would ensure high availability.

The Solution

Hazelcast replaced Ellie Mae’s homegrown caching solution to serve Ellie Mae’s constantly increasing business demands on a highly available and consistent infrastructure. Ellie Mae’s engineering team experienced this as a seamless replacement with no significant architectural changes to the application.

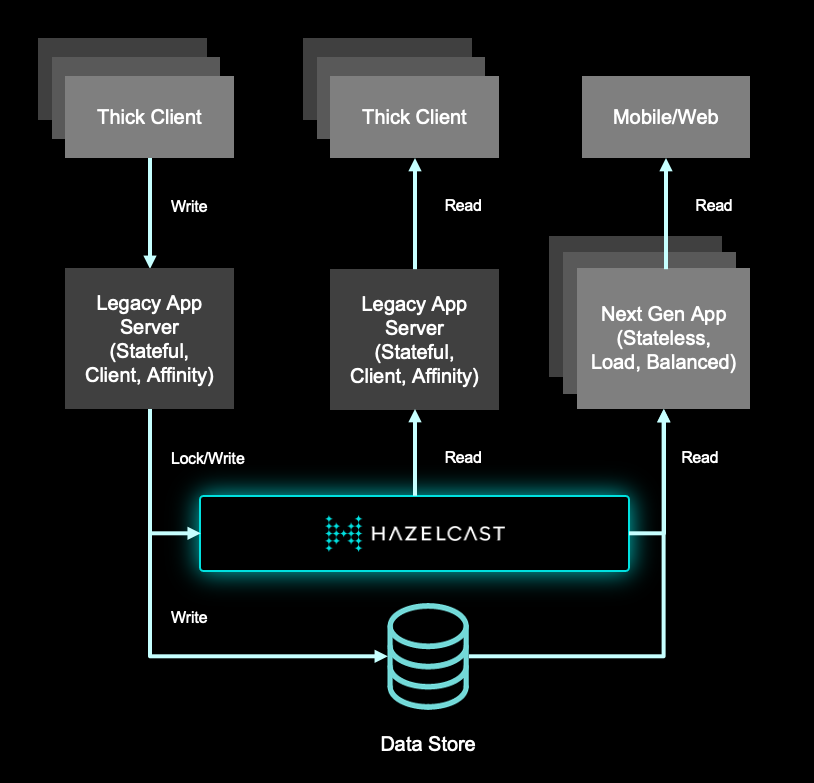

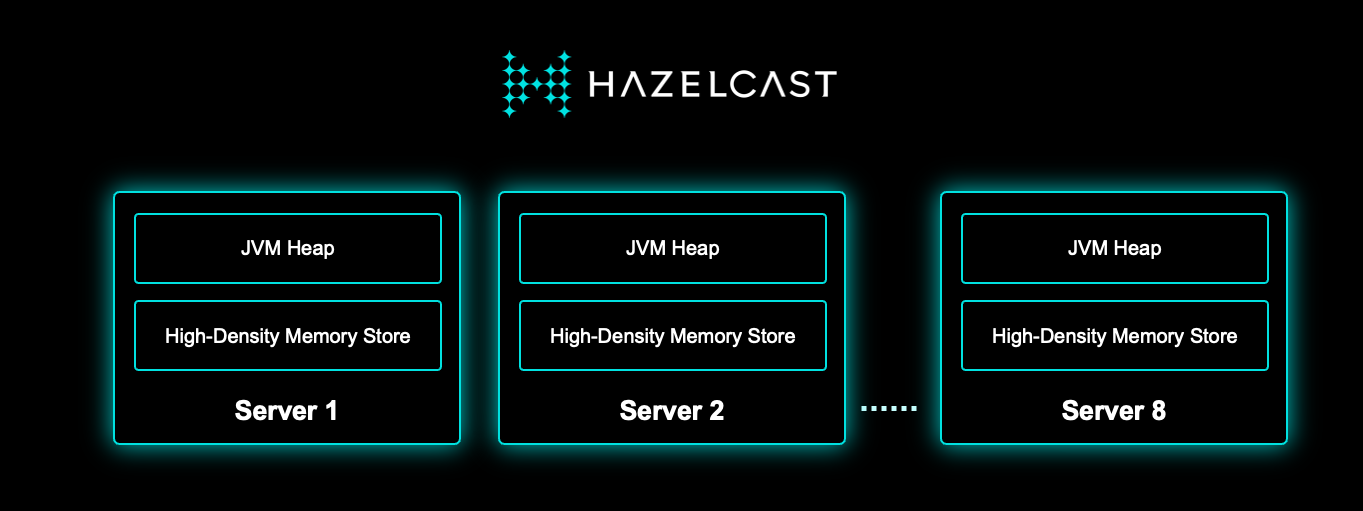

In the new architecture with Hazelcast, Ellie Mae’s Encompass application uses ~100 GB of data stored in Hazelcast servers (see the architecture diagrams). This gives application clients very fast access to data and increased throughput, resulting in better overall performance. Storing this much data in memory was made possible by Hazelcast High-Density Memory Store, a data store that lives outside the Java Virtual Machine (JVM) heap.

Hazelcast High-Density Memory Store avoids garbage-collection-related problems because the garbage collector does not run over its store, which prevents application pauses and provides low-latency, predictable access to the data. High-Density Memory Store capacity is bounded only by the amount of RAM a system has access to, eliminating the need to run JVMs with large heaps.

By using Hazelcast High-Density Memory Store, Ellie Mae made sure that it does not have to invest in expensive hardware to scale up its data storage capacity; it only needs to provision more RAM. A small change in the Hazelcast configuration then allows it to store more data on the same machine. Ellie Mae currently stores 250,000 objects and expects this number to grow many times over in the near future.

Another important aspect of the deployment is the ease with which all of Ellie Mae’s .NET clients communicate with Hazelcast and with the data stored in Hazelcast High-Density Memory Store. The new version of the application enjoys fine-grained control over the transactional consistency of data by using explicit Hazelcast lock APIs, and it achieves high availability by using Hazelcast backups.
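The combination of explicit locks and backup copies can be sketched in a single process as follows. This is an analogy under stated assumptions, not Hazelcast's lock or backup API: `LockedStore`, `update`, and `fail_over` are hypothetical names, and the "backup" here is just a second dictionary written synchronously with the primary.

```python
import threading
from collections import defaultdict


class LockedStore:
    """Key-value store with a lock per key and a synchronously written backup copy."""

    def __init__(self):
        self._primary = {}
        self._backup = {}
        self._locks = defaultdict(threading.Lock)
        self._locks_guard = threading.Lock()   # protects creation of per-key locks

    def _lock_for(self, key):
        with self._locks_guard:
            return self._locks[key]

    def update(self, key, fn, default=None):
        # Hold the key's lock for the whole read-modify-write so that
        # concurrent updaters cannot interleave and lose writes.
        with self._lock_for(key):
            new_value = fn(self._primary.get(key, default))
            self._primary[key] = new_value
            self._backup[key] = new_value      # backup written before the lock is released
            return new_value

    def get(self, key):
        return self._primary.get(key)

    def fail_over(self):
        # Simulate losing the primary copy and promoting the backup.
        self._primary.clear()
        self._primary.update(self._backup)


store = LockedStore()


def worker():
    for _ in range(1000):
        store.update("counter", lambda v: v + 1, default=0)


threads = [threading.Thread(target=worker) for _ in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(store.get("counter"))   # 8000: no increments were lost
```

Locking per key rather than globally keeps unrelated keys from blocking each other, which is the kind of fine-grained control the paragraph above refers to.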

And the Hazelcast layer would look something like the following:

Reducing Total Cost of Ownership

Hazelcast provides all of the benefits of open source software including strong price performance, better ease of ownership, less vendor lock-in and a better vendor relationship. However, the total cost was reduced by much more than just the cost of the license.

One of the components of the Total Cost of Ownership (TCO) is the impact of good software on hardware costs. With the decreasing cost of RAM, in-memory solutions are now affordable for mass enterprise deployment. By optimizing the use of distributed clustered memory and CPUs, Hazelcast squeezes tremendous performance out of the cluster. Because disk access is about a thousand times slower than RAM access, the use of Hazelcast enables organizations to buy less hardware and use the hardware they have much more effectively.

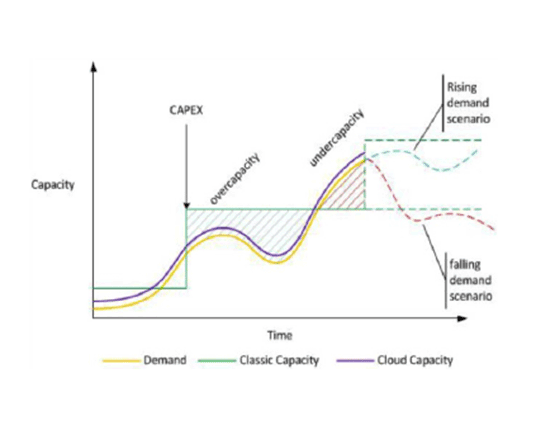

Ben Kepes, in his paper “Moving your Infrastructure to the Cloud: How to Maximize Benefits and Avoid Pitfalls,” describes how elasticity can change the cost of infrastructure. The speed and cost of provisioning and de-provisioning capacity can directly hit the bottom line due to the impact of overcapacity and under capacity. Hazelcast’s elasticity allows organizations to scale up and down quickly and dynamically.

Extensive Testing

Because Ellie Mae’s revenue comes from serving the financial sector, Hazelcast was subjected to an unprecedented level of testing. Ensuring data consistency and availability was what separated success from failure for the platform. Such was the scale of testing that Ellie Mae had its test suites running continuously, 24/7, for weeks on its own highly capable infrastructure.

The tests involved overflowing and overloading every possible parameter and performing stress tests that would never be encountered under normal circumstances. The goal became not for the system to never fail but to determine how the system could be made to fail in dozens of scenarios with grace and without impact to the mission. These extraordinary tests uncovered some new scenarios that Hazelcast now handles gracefully. The whole exercise also helped Hazelcast’s .NET client evolve into a much more mature and stable offering.

By exceeding the capacity of the cluster, the tests stressed Hazelcast’s ability to evict unused data fast enough. Performance under these extreme conditions was difficult to predict and to test, because when tests are built to represent a large amount of data amid ultra-complex business algorithms, sooner or later they are bound to run out of memory. Hazelcast now passes all of these extreme cases and will be going into production on mission-critical systems.
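As a rough illustration of what "evicting unused data" under capacity pressure means, the following sketch implements a least-recently-used (LRU) policy in a single process. The source does not say which eviction policy Ellie Mae configured, so LRU is an assumption made for this example, and `LruCache` and `max_entries` are hypothetical names.

```python
from collections import OrderedDict


class LruCache:
    """Bounded cache that evicts the least recently used entry when full."""

    def __init__(self, max_entries):
        self._max = max_entries
        self._data = OrderedDict()   # ordered from oldest use to most recent use
        self.evictions = 0

    def get(self, key):
        if key not in self._data:
            return None
        self._data.move_to_end(key)  # a read counts as a use
        return self._data[key]

    def put(self, key, value):
        if key in self._data:
            self._data.move_to_end(key)
        self._data[key] = value
        # Writing past capacity forces eviction of the least recently used entries.
        while len(self._data) > self._max:
            self._data.popitem(last=False)
            self.evictions += 1

    def __contains__(self, key):
        return key in self._data

    def __len__(self):
        return len(self._data)
```

A stress test in this spirit would keep writing well beyond `max_entries` and check that the cache size stays bounded while recently read entries survive.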

Hazelcast Simulator

The Hazelcast Simulator was an important element in setting up Ellie Mae’s Encompass application to handle extreme data loading.

The Hazelcast Simulator is designed to stress test a Hazelcast environment with various data loads in order to verify its resilience to all kinds of problems, including:

- Catastrophic failure of the network, e.g. through IP table changes.

- Partial failure of the network by congestion, dropping packets, etc.

- Catastrophic failure of a JVM, e.g. by calling kill -9, or calling with different termination levels.

- Partial failure of JVM, e.g. send a message that it should start claiming 90% of the available heap and see what happens. Or send a message that a trainee should spawn a number of threads that consume all of the CPU. Or send random packets with huge size. Or open many connections to a Hazelcast Instance.

- Catastrophic failure of the OS (e.g. calling shutdown or calling an Amazon EC2 API to do a hard kill).

- Internal buffer overflow by running for a long time and at a high pace.

Hazelcast uses the Simulator internally to ensure that its products are resilient in the most extreme conditions. The Simulator is an open-source offering by Hazelcast and is available to download and use under the Apache license.

Customer Success

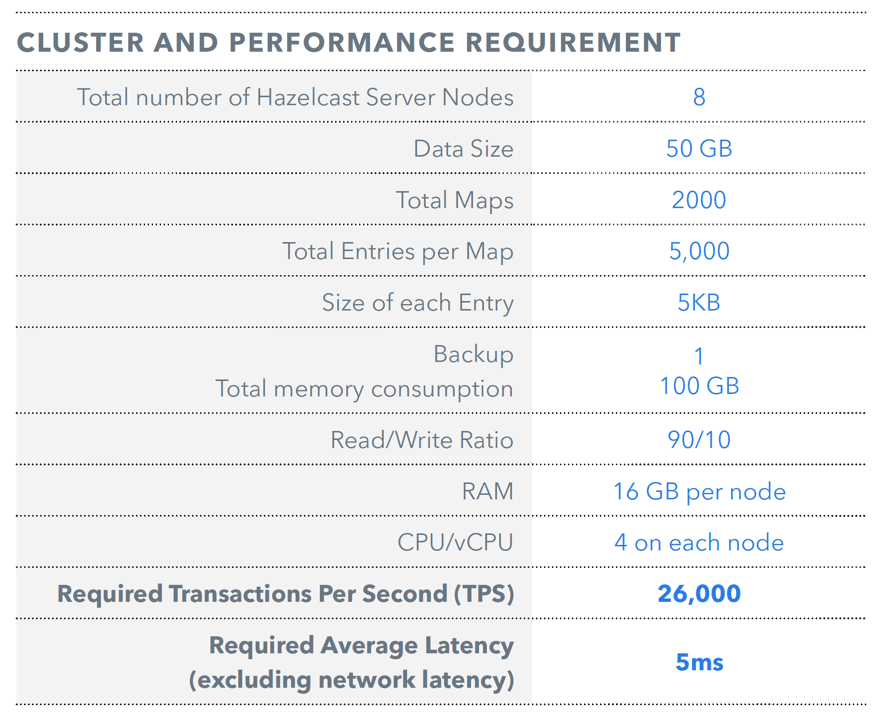

By using Hazelcast in its infrastructure, Ellie Mae ensured that it met its predefined SLA of serving an application request within 5 milliseconds for a 5 KB data element. Hazelcast consistently met that SLA and scaled very well in both directions, vertically and horizontally, while maintaining the caching layer. This meant a considerably lower load on the database and consistently high, stable performance with Hazelcast. Here are some numbers that explain the original cluster requirements from Ellie Mae and what Hazelcast was able to deliver.

Below are the results of benchmarking with Hazelcast plugged into Ellie Mae’s application.

Test - C# client application sending put and get transactions to Hazelcast Servers:

- 4 threads on each client

- Average TPS per client = 4,500

- Average TPS across 8 nodes cluster = 31,500

- Average latency < 2 milliseconds

Towards the end of benchmarking, the application was able to deliver a TPS of 45,000 (with more threads and more client application nodes) and a latency of < 2 milliseconds. This performance scaled linearly with the addition of client nodes, and it greatly exceeded Ellie Mae’s TPS and latency requirements. The highlight of this benchmarking was the use of High-Density Memory Store, which resulted in ultra-low CPU usage and less than 3 GB of heap consumption on each of the server nodes. This yielded highly predictable latencies and SLA delivery in the high 9s.
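The benchmark figures can be cross-checked with simple arithmetic. The sketch below assumes that each client thread issues one blocking request at a time, so a thread's throughput is the inverse of its average round-trip time; the source does not state this, and the function names are hypothetical.

```python
def per_thread_rate(tps_per_client, threads_per_client):
    """Operations per second that each thread must sustain."""
    return tps_per_client / threads_per_client


def implied_latency_ms(ops_per_second_per_thread):
    """Average round-trip time if a thread issues one blocking call at a time."""
    return 1000.0 / ops_per_second_per_thread


rate = per_thread_rate(4500, 4)              # 1125.0 ops/s per thread
latency = implied_latency_ms(rate)           # about 0.89 ms per operation
clients_at_average = 31_500 / 4500           # 7.0 clients' worth of load
clients_at_peak = 45_000 / 4500              # 10.0 clients' worth of load

print(f"{rate:.0f} ops/s per thread, {latency:.2f} ms per op")
print(f"{clients_at_average:.0f} average clients for 31,500 TPS")
print(f"{clients_at_peak:.0f} average clients for 45,000 TPS")
```

Under that assumption, 4,500 TPS from 4 threads implies roughly 0.89 ms per operation, which is consistent with the reported average latency of under 2 milliseconds. The cluster-wide figure of 31,500 TPS corresponds to seven clients running at the per-client average; the source does not state how many clients were used.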