Simulator 0.6 released!

Today we released version 0.6 of the Hazelcast Simulator tool. It is our production simulator used to test Hazelcast and Hazelcast-based applications in clustered environments.

Please read “Simulator 0.4 released” for a general introduction or have a look at the Hazelcast Simulator Documentation. You can download Hazelcast Simulator here.

Improved coordination of test workflow

In this release, we added new command line options to the Coordinator to control the test workflow of Simulator.

Synchronization of test phases

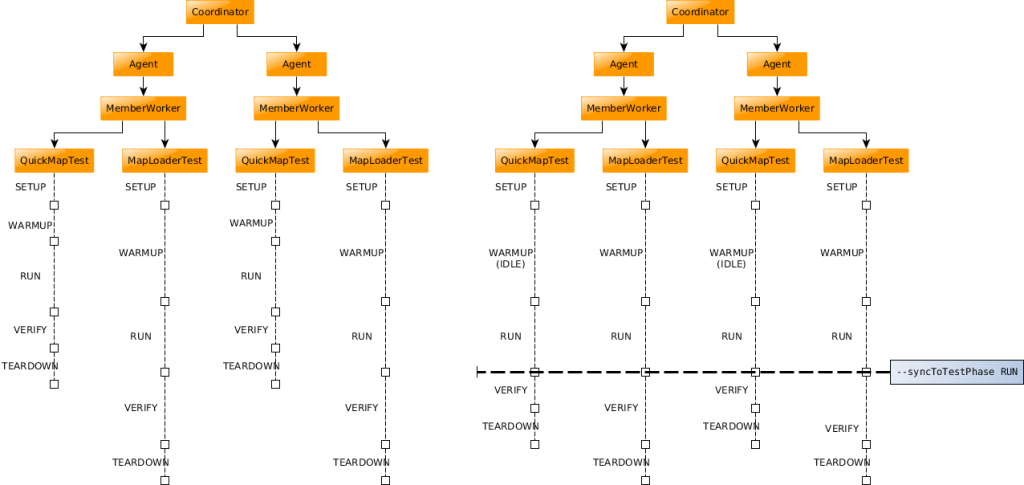

We added an option to control the synchronization of test phases (SETUP, WARMUP, RUN, VERIFY, TEARDOWN).

On the left you see the old behavior for tests running in parallel: each test proceeds at its own pace, and the test phases are only synchronized within the scope of a single test. On the right you see the new behavior with the option --syncToTestPhase RUN: the test phases of all parallel running tests are synchronized up to and including the defined test phase. After that phase is completed, the tests continue at their own pace.
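To make the synchronization rule concrete, here is a minimal sketch using a plain JDK Phaser as a shared barrier between simulated tests. It is not Simulator's implementation: the PhaseSyncSketch class, the TestPhase enum and the sleep-based "work" are hypothetical. Each test waits for all others after every phase up to and including syncToPhase (RUN, as with --syncToTestPhase RUN) and runs the remaining phases without coordination.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.Phaser;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical model of the five test phases, in execution order.
enum TestPhase { SETUP, WARMUP, RUN, VERIFY, TEARDOWN }

final class PhaseSyncSketch {

    /**
     * Runs testCount simulated tests in parallel threads. After each phase up to and
     * including syncToPhase, a test waits at a shared barrier until all tests finished
     * that phase. Returns "testId:PHASE" -> {startSeq, endSeq} (globally ordered).
     */
    static Map<String, int[]> run(int testCount, TestPhase syncToPhase) {
        Map<String, int[]> events = new ConcurrentHashMap<>();
        AtomicInteger sequence = new AtomicInteger();
        Phaser barrier = new Phaser(testCount); // one party per parallel test
        List<Thread> threads = new ArrayList<>();

        for (int t = 0; t < testCount; t++) {
            final int testId = t;
            Thread thread = new Thread(() -> {
                for (TestPhase phase : TestPhase.values()) {
                    int start = sequence.incrementAndGet();
                    sleepQuietly(5L * (testId + 1)); // tests deliberately run at different speeds
                    int end = sequence.incrementAndGet();
                    events.put(testId + ":" + phase, new int[] {start, end});
                    if (phase.compareTo(syncToPhase) <= 0) {
                        barrier.arriveAndAwaitAdvance(); // wait until every test finished this phase
                    }
                    // phases after syncToPhase continue at each test's own pace
                }
            });
            threads.add(thread);
            thread.start();
        }
        for (Thread thread : threads) {
            try {
                thread.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException(e);
            }
        }
        return events;
    }

    private static void sleepQuietly(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }
}
```

With syncToPhase set to RUN, no test records the start of VERIFY before every test has recorded the end of RUN, while the fast tests may still finish VERIFY and TEARDOWN well before the slow ones.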

Duration of RUN phase

We added a new option to define the duration of the RUN phase. The usual way is to pass a duration to the Coordinator, e.g. --duration 5m. With the new command line option --waitForTestCaseCompletion, the Coordinator instead waits for the test to complete by itself. If you combine both options, the Coordinator waits for whichever happens first: the duration expires or the test completes.
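The "whichever happens first" rule maps naturally onto a timed latch wait. The following is a minimal sketch, assuming test completion is signalled through a java.util.concurrent.CountDownLatch; RunPhaseTimer, StopReason and the millisecond-based parameters are hypothetical and do not reflect the Coordinator's internals.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;

final class RunPhaseTimer {

    enum StopReason { DURATION_ELAPSED, TEST_COMPLETED }

    /**
     * durationMillis <= 0 models "no --duration given";
     * waitForCompletion models --waitForTestCaseCompletion.
     */
    static StopReason awaitRunPhase(CountDownLatch testCompleted, long durationMillis, boolean waitForCompletion) {
        try {
            if (waitForCompletion && durationMillis > 0) {
                // both options: stop on whichever happens first
                return testCompleted.await(durationMillis, TimeUnit.MILLISECONDS)
                        ? StopReason.TEST_COMPLETED
                        : StopReason.DURATION_ELAPSED;
            }
            if (waitForCompletion) {
                testCompleted.await(); // no duration: wait until the test stops itself
                return StopReason.TEST_COMPLETED;
            }
            if (durationMillis <= 0) {
                throw new IllegalArgumentException("either a duration or waitForCompletion is required");
            }
            TimeUnit.MILLISECONDS.sleep(durationMillis); // only a duration: completion is ignored
            return StopReason.DURATION_ELAPSED;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for the RUN phase", e);
        }
    }
}
```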

To stop a test, we added the methods stopWorker() and stopTestContext() to the AbstractWorker class. The first stops only the current worker thread; the second stops the whole test instance on the member.

```java
package com.hazelcast.simulator.tests;

import com.hazelcast.core.IMap;
import com.hazelcast.simulator.test.TestContext;
import com.hazelcast.simulator.test.annotations.RunWithWorker;
import com.hazelcast.simulator.test.annotations.Setup;
import com.hazelcast.simulator.worker.tasks.AbstractMonotonicWorker;

public class MapTest {

    // properties
    public int entryCount = 100000;

    private IMap<Integer, Integer> map;

    @Setup
    public void setUp(TestContext testContext) throws Exception {
        map = testContext.getTargetInstance().getMap(getClass().getSimpleName());
    }

    @RunWithWorker
    public Worker createWorker() {
        return new Worker();
    }

    private class Worker extends AbstractMonotonicWorker {

        private int operationCount;

        @Override
        protected void timeStep() {
            // each Worker thread performs entryCount put operations and then stops itself
            if (operationCount++ >= entryCount) {
                stopWorker();
                return;
            }
            map.put(randomInt(), randomInt());
        }
    }
}
```

This new behavior is useful if you want to create a test which stops after e.g. 5 million operations, regardless of how long it takes.

Passive members

If you run client and member workers on the same machine, the default behavior of Simulator is to put the member workers in passive mode. Passive members skip their RUN phase (but not the other phases). If you want to execute your tests on all workers, you can disable passive mode by setting the property PASSIVE_MEMBERS=false in your simulator.properties.
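The passive-member rule can be summarized as a small decision function. This is a minimal sketch only: PassiveMemberRule, its Phase enum and the executesPhase method are hypothetical, and it assumes that an absent PASSIVE_MEMBERS property means passive mode is on (the default described above). In practice the Properties object would be loaded from simulator.properties via Properties.load(Reader).

```java
import java.util.Properties;

final class PassiveMemberRule {

    // Hypothetical copy of the test phases, for illustration only.
    enum Phase { SETUP, WARMUP, RUN, VERIFY, TEARDOWN }

    /**
     * Returns true if a worker executes the given phase. Member workers that share a
     * machine with client workers are passive by default and skip only the RUN phase;
     * PASSIVE_MEMBERS=false disables passive mode.
     */
    static boolean executesPhase(Phase phase, boolean isMemberWorker,
                                 boolean clientWorkersOnSameMachine, Properties simulatorProperties) {
        // assumption: a missing PASSIVE_MEMBERS property behaves like "true"
        boolean passiveMode = Boolean.parseBoolean(
                simulatorProperties.getProperty("PASSIVE_MEMBERS", "true"));
        boolean isPassive = isMemberWorker && clientWorkersOnSameMachine && passiveMode;
        return !(isPassive && phase == Phase.RUN);
    }
}
```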

External clients

Another addition in this release is basic support for external clients. We used this internally to create a Simulator test for our C++ client, but you can also use it to run your own Hazelcast application in combination with a Simulator testsuite.

This can be useful if you plan to increase the load on your existing Hazelcast cluster (e.g. by using a new Hazelcast feature) and want to prototype that behavior as a Simulator test. Your application can also provide latency and throughput results, which are then added to the Simulator performance reports.

Configuring an external application

We use a new Simulator test to start an external application or client.

- Put your application or binary file in a folder named upload in your working directory. Simulator will automatically upload those files to all members.

- Add the test ExternalClientStarterTest to your Simulator testsuite and configure it (binary name, arguments, process count, etc.). This starts your external application.

- Add and configure the test ExternalClientTest to your Simulator testsuite. This test keeps Simulator running until your external application sends a stop signal (using ICountDownLatch). After that, it collects your provided latency and throughput results (using IList).
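The stop-signal and result-collection handshake from the last step can be modeled locally. In the sketch below a java.util.concurrent.CountDownLatch and a CopyOnWriteArrayList stand in for the distributed ICountDownLatch and IList; the class and method names are hypothetical, and the real test's latch and list names are not shown here. The important detail is the ordering: each external client publishes its results before counting down, so the test sees all results once the latch opens.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;

final class ExternalClientHandshake {

    // local stand-ins for the distributed ICountDownLatch and IList
    private final CountDownLatch stopSignal;
    private final List<Long> latencyResultsNanos = new CopyOnWriteArrayList<>();

    ExternalClientHandshake(int externalProcessCount) {
        this.stopSignal = new CountDownLatch(externalProcessCount);
    }

    /** Called by each (simulated) external client once its workload is done. */
    void reportAndStop(List<Long> latenciesNanos) {
        latencyResultsNanos.addAll(latenciesNanos); // publish results first ...
        stopSignal.countDown();                     // ... then send the stop signal
    }

    /** Called by the test: keep running until all clients signalled, then collect results. */
    List<Long> awaitAndCollect(long timeout, TimeUnit unit) {
        try {
            if (!stopSignal.await(timeout, unit)) {
                throw new IllegalStateException("external clients did not send a stop signal in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return new ArrayList<>(latencyResultsNanos);
    }
}
```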

Probe improvements

To process external latency and throughput results, the probes have been enhanced with some new methods:

- recordValue(latencyNanos) for IntervalProbes adds a latency value from an external application.

- setValues(durationMs, invocations) for SimpleProbes adds throughput values from an external application.

- disable() disables a specific probe instance. This prevents exceptions when benchmark results are collected, e.g. if just a single worker instance produced records.
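As an illustration of what these methods feed into, here is a minimal, single-threaded sketch of a probe-like class that mirrors the three method names. It is hypothetical and is NOT Simulator's IntervalProbe or SimpleProbe: it simply derives throughput as invocations × 1000 / durationMs and a mean latency, and shows how disabled probes are skipped during collection instead of failing on missing data.

```java
import java.util.ArrayList;
import java.util.List;

final class ExternalResultProbeSketch {

    private final List<Long> latenciesNanos = new ArrayList<>();
    private long durationMs;
    private long invocations;
    private boolean disabled;

    // mirrors recordValue(latencyNanos): add one externally measured latency
    void recordValue(long latencyNanos) {
        if (!disabled) {
            latenciesNanos.add(latencyNanos);
        }
    }

    // mirrors setValues(durationMs, invocations): set externally measured throughput data
    void setValues(long durationMs, long invocations) {
        if (!disabled) {
            this.durationMs = durationMs;
            this.invocations = invocations;
        }
    }

    // mirrors disable(): this probe is skipped when results are collected
    void disable() {
        disabled = true;
    }

    boolean isDisabled() {
        return disabled;
    }

    double throughputPerSecond() {
        if (durationMs <= 0) {
            throw new IllegalStateException("no throughput values set");
        }
        return invocations * 1000.0 / durationMs;
    }

    double meanLatencyMicros() {
        if (latenciesNanos.isEmpty()) {
            throw new IllegalStateException("no latency values recorded");
        }
        long sum = 0;
        for (long latency : latenciesNanos) {
            sum += latency;
        }
        return sum / (double) latenciesNanos.size() / 1000.0;
    }

    /** Collects throughput from enabled probes only; an enabled probe without data would throw. */
    static List<Double> collectThroughput(List<ExternalResultProbeSketch> probes) {
        List<Double> results = new ArrayList<>();
        for (ExternalResultProbeSketch probe : probes) {
            if (!probe.isDisabled()) {
                results.add(probe.throughputPerSecond());
            }
        }
        return results;
    }
}
```

For example, setValues(2000, 10000) corresponds to 10,000 invocations in 2 seconds, i.e. 5,000 operations per second.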

Changes in Hazelcast tests

We added and enhanced several tests for Hazelcast.

- Added GenericOperationTest to add pressure on generic operations (priority and normal).

- Added PagingPredicateTest to allow fine-tuning of PagingPredicate tests without adding noise to the existing MapPredicateTest.

- Added MapEvictAndStoreTest to test for duplicate store entries when eviction happens and the MapStore is slow (see Hazelcast issue #4448).

- Added map.loadAll() to MapStoreTest.

- Added TransactionOptions as a property to MapTransactionGetForUpdateTest.

- Variable byte value length in IntByteMapTest.

Improvements

We improved the log messages on EC2 failures (showing the message instead of the full stack trace) and on test failures (adding the testId). We replaced StringBuffer with StringBuilder in utility classes. We removed the --bindAddress option from the Agent, since it now binds to 0.0.0.0. We moved the JCache generation to a helper class and used it in all JCache tests, which removed a lot of duplicated code.

We cleaned up the KeyUtils class and its usage, and improved the test phase handling by introducing an enum for the test phases. We also cleaned up many test classes, mostly to resolve CheckStyle issues, by rewriting them to use the @RunWithWorker annotation.

We reduced the complexity of PropertyBindingSupport and increased the code coverage of WorkerPerformanceMonitor, WorkerMessageProcessor and WorkerCommandRequestProcessor. The code coverage of the probes module is now over 87.5%. Simulator is now compliant with our CheckStyle and FindBugs rules.

Fixes

We also fixed some smaller issues. The Java version in the project archetype was broken. We fixed an NPE in FileUtils when a wildcard in the classpath didn’t match anything. The PerformanceMonitor output showed NaN when no performance numbers were available. We also fixed an IllegalThreadStateException during WorkerPerformanceMonitor startup and a spuriously occurring InterruptedException in PerformanceThread during Agent shutdown.

Property binding now works with whitespace. Test properties now have to be public, which prevents static code analyzers from suggesting a conversion to local variables. The ProbesResultXmlReader could not handle multiple probe results per file. We changed the HostAddressPicker to scan all interfaces before throwing an exception. We added a fallback for retrieving the OperationService to cope with a binary-incompatible change in Hazelcast internals. We improved the stability of time-based unit tests and the verify method of ExampleTest. Lastly, we renamed the Visualiser tool to Visualizer.

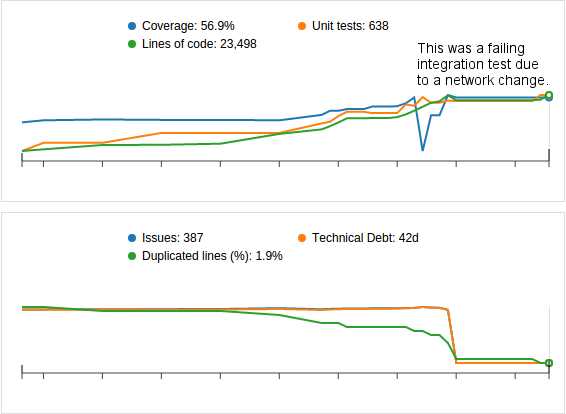

Code Quality

We increased code coverage by 5.9% and added 131 new tests. We resolved 1033 SonarQube issues, reduced the technical debt by 102 days and reduced the code duplication by 1.5%.

We configured more rules in our SonarQube instance, which removed several hundred false positive issues (mostly due to our test API with public properties and “magic numbers”).

Try Simulator for yourself, get started today!